Authors, filmmakers, and television programs have given us visions of robots serving humanity for most of the past 100 years. Some of the most iconic fictional ones include the benevolent Robby in the 1956 movie “Forbidden Planet,” the chatty C-3PO from all six of the “Star Wars” films, and the humanoid Commander Data from “Star Trek: The Next Generation” – sentient beings that can freely move, converse, and even reason. Actual robot technology has not reached that level yet, but continues to make rapid advances in the military, security, manufacturing, and healthcare fields. This year alone has seen many new medical applications. This overview article will examine some of the newest uses of medical robotics alongside some now-standard uses.

A Little History

As the world’s population ages, medical and home health groups seek new ways to care for the elderly. A new science fiction film, “Robot & Frank,” opened in late August. It introduces a household healthcare robot that keeps tabs on an elderly man and helps improve his physical and mental health.

A similar, although far more primitive, mobile robotic assistant for the elderly was created in 1999 by a multi-disciplinary, multi-university project with researchers from the University of Pittsburgh; the University of Michigan, Ann Arbor; and Carnegie Mellon University, Pittsburgh, PA, who called their mobile robot a “Nursebot.” The in-home-use Nursebot had two primary functions: reminding people about such daily activities as eating, drinking, taking medicine, and using the bathroom; and guiding them through their environments.

A different non-mobile elderly companion robot was developed by researchers at The National Institute of Advanced Industrial Science and Technology (AIST), Kimura Clinic, and Brain Functions Laboratory in Japan. Called Paro, it’s a cute and cuddly therapeutic baby harp seal-shaped companion for those with cognitive disorders, such as Alzheimer’s. (See Figure 1) The current Paro, which responds with movement and sound to human interaction, is the eighth generation of a design that has been in use in Japan since 2003. By reacting to voice, it motivates people to remain communicative. Paro is marketed within Japan and the US.

Robot-Assisted Surgery Continues to Expand

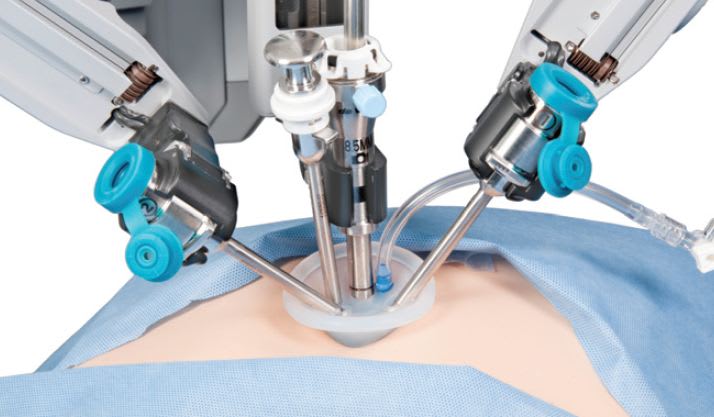

In the surgical arena, the da Vinci® surgical system has been assisting surgeons since 1999, when it was introduced by Intuitive Surgical, Inc., Sunnyvale, CA. The system enables surgeons to operate through minimally invasive incisions, allowing for shorter surgeries, especially coronary, urological, colorectal, and gynecological surgeries. (See Figure 2)

The system consists of a cart with four interactive robotic arms controlled by a surgeon seated at a console. Three arms hold tools and act as electrocautery instruments as well as scalpels and scissors. The fourth arm is equipped with an endoscopic camera, which gives the surgeon a full view of the operating site from the console. The latest system, the Si, released in 2009, has dual console controls, which can be used by two surgeons working collaboratively or for training new surgeons.

The original prototype for the da Vinci System was developed in the late 1980s at the former Stanford Research Institute with grant support from the U.S. Army and NASA. Today, more than 1,840 da Vinci systems are installed in more than 1,450 hospitals worldwide. See a video of the system in use at http://www.techbriefs.com/tv/davincigrape .

A new robot-assisted surgical system was approved in July 2012. The CorPath 200 System, developed by Corindus Vascular Robotics, Natick, MA, received FDA 510(k) clearance to assist interventional cardiologists in performing percutaneous coronary interventions, procedures that restore blood flow to blocked arteries in patients with coronary artery disease.

In a traditional operation, the surgeon would need to wear a heavy lead apron for protection from the high radiation present in the cath lab, which could lead to back strain and potential radiation exposure. The CorPath 200 System, shown in Figure 3, consists of a robotic drive and single-use cassette mounted on an articulating arm attached to the cath lab patient table. The physician control console is located in a separate radiation-shielded cockpit and allows the physician to precisely control the coronary guidewires and stent/balloon catheters using simple touch-screen and joystick controls. Since the cockpit is shielded, the cardiologist sits in comfort. A video of the CorPath system can be seen at http://www.techbriefs.com/tv/corpathsystem . In August, Royal Philips Electronics, Amsterdam, the Netherlands, signed an agreement to be the exclusive distributor of the CorPath 200.

In the past few years, robotic systems for orthopedic surgeries have sprung up as well. In 2009, Mako Surgical Corp., Ft. Lauderdale, FL, introduced its RIO™ Robotic Arm Interactive Orthopedic System to make bone- and tissue-sparing partial knee resurfacing available to a growing population of patients with early to mid-stage osteoarthritis of the knee. The procedure is a less invasive treatment option than total knee replacement. Mako’s robotic arm system is the first FDA-cleared robotic arm system for orthopedic surgery.

The following year, Omnilife science Inc., East Taunton, MA, acquired Praxim’s APEX surgical robot for total knee replacement. This robotic system allows intra-operative customization to accurately place the knee implant. It uses patented technology that creates a precise virtual 3D model of the patient’s anatomy, and provides ideal positioning of an automated bone-cutting guide for leg alignment.

Telemedicine Assistant Brings Remote Doctors to Patients

This summer, InTouch Health, Santa Barbara, CA, announced its new RP-VITA (Remote Presence Virtual + Independent Telemedicine Assistant) telepresence robot. It features state-of-the-art mapping, obstacle detection, and avoidance technology for navigation, and it can interface with diagnostic devices and access electronic medical records. (See Figure 4) The RP-VITA is the first product of a yearlong collaboration with iRobot Corp., the company that makes the Roomba vacuum cleaner and a range of robots used to defuse explosives in Iraq and clean up ruined nuclear reactors in Japan.

RP-VITA includes a simple iPad user interface for control and interaction, and secure networking technology. A faraway doctor can simply tap a patient’s name or room number on a hospital map, and the robot finds its way to the patient and pulls up clinical data for the doctor to assess. High-resolution audio and video and laser pointers allow the doctor to examine the patient remotely. Its “head” contains a monitor that displays video of a remote doctor and provides a way for the correct expert to be present and to coordinate with others on the patient at the same time, especially in emergency or critical situations. A video of the robot can be seen at http://www.techbriefs.com/tv/RP-VITA .

InTouch says that its robots are now in 500 hospitals and handle a growing 60,000 high-acuity consults a year, up from 300 hospitals and 20,000 consults two years ago.

Turning Thoughts into Action for Stroke Patients

For most people, a thought precedes an action. But for stroke victims who have lost the full use of their limbs, that action can be very difficult. Scientists at Rice University, the University of Houston (UH), and The Institute for Rehabilitation and Research (TIRR) Memorial Hermann Healthcare System, all in Houston, TX, are teaming up to help victims recover that ability to the fullest extent possible.

The multi-disciplinary team is working on a new noninvasive brain-machine interface to a robotic orthotic device for arm rehabilitation. The combination technology will use electroencephalograph (EEG) devices to translate brain waves from subjects into control outputs allowing stroke survivors to operate an intelligent exoskeleton worn from fingertips to elbow to perform repetitive motions. The willing use of repeated motions will retrain the brain’s motor networks, they claim.

Researchers at Rice are developing the exoskeleton, and those at UH are working on the EEG-based neural interface. Later, the device will be validated by UT Health physicians at TIRR Memorial Hermann, all through a grant funded by the National Institutes of Health and the President’s National Robotics Initiative, a collaborative partnership by the NIH, National Science Foundation, NASA, and the U.S. Department of Agriculture.

Team leader José Luis Contreras-Vidal, director of the UH Laboratory for Noninvasive Brain-Machine Interface Systems, was the first to successfully reconstruct 3D hand and walking movements from brain signals recorded using an EEG brain cap. That technology allows users to control robotic legs with their thoughts, and below-elbow amputees to control neuro-prosthetic limbs.

“The capability to harness a user’s intent through the EEG neural interface to control robots makes it possible to fully engage the patient during rehabilitation,” Contreras-Vidal said. “The EEG technology will also provide valuable real-time assessments of plasticity in brain networks due to the robot intervention — critical information for reverse engineering of the brain.”

Artificial Brain Controls Handling Objects

Researchers at the University of Granada in Spain, in collaboration with other European institutions, have developed an artificial cerebellum (a biologically inspired adaptive microcircuit) to control a robotic arm with human-like precision, they say.

Previously, robotics researchers achieved very precise movements, but only with robots that move at high speed, require strong forces, and consume a lot of power. The Granada research team says that those models therefore cannot be applied to robots interacting with humans, as a malfunction may be dangerous.

To solve this challenge, they have implemented a new cerebellar spiking model that adapts to corrections and stores their sensorial effects. The developers of the new cerebellar model use a robot that performs automatic learning by extracting the input layer functionalities of the brain cortex. In addition, they developed two control systems that enable accurate control of the robotic arm during object handling. This model combines an error training approach with predictive adaptive control.

The Better to See You: Improving Robot Vision

Using piezoelectric materials, mechanical engineers at Georgia Institute of Technology, Atlanta, say that they have replicated the muscle motions of the human eye to control camera systems designed to improve the operation of robots. This could potentially make robotic tools safer and more effective for MRI-guided surgery and rehabilitation. The centerpiece of the new control system is a piezoelectric cellular actuator that uses a novel technology to allow a robot eye to move more like a human eye, which could make video feeds more intuitive.

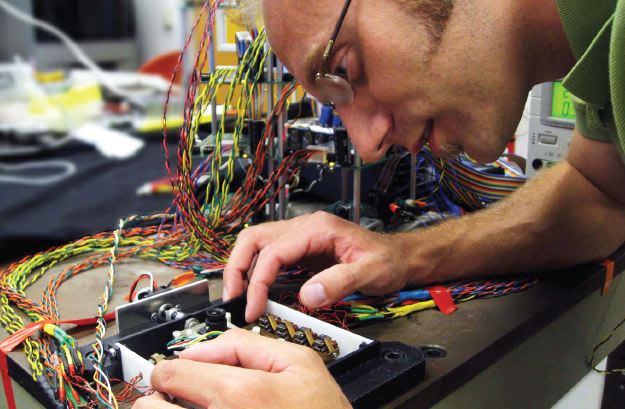

“For a robot to be truly bio-inspired, it should possess actuation, or motion generators, with properties in common with the musculature of biological organisms,” says PhD candidate Joshua Schultz, shown in Figure 5. “The actuators developed in our lab embody many properties in common with biological muscle, especially a cellular structure. Essentially, in the human eye, muscles are controlled by neural impulses. Eventually, the actuators we are developing will be used to capture the kinematics and performance of the human eye.”

The cellular actuator concept developed by the team connected many small actuator units in parallel. They worked with 16 amplified piezoelectric stacks per side using a contractile ceramic to generate motion. Multiple stacks addressed the need for more layers of amplification.

This research was presented in late June at the IEEE International Conference on Biomedical Robotics and Biomechatronics in Rome, Italy. Future research by this team will focus on the development of a design framework for highly integrated robotic systems, such as medical and rehabilitation robots.

These Robotic Legs Are Made for Walking

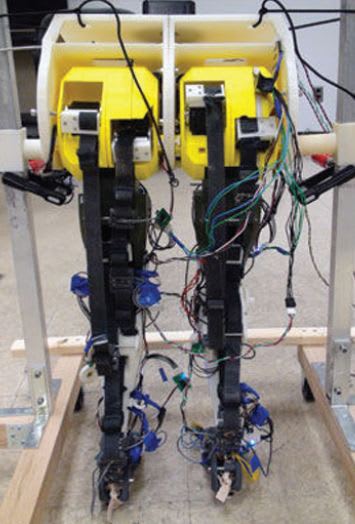

A group of researchers from the Robotics and Neural Systems Laboratory at the University of Arizona, Tucson, has produced a robotic set of legs that they believe is the first to fully model walking in a biologically accurate manner. (See Figure 6) The neural and musculoskeletal architecture, along with sensory feedback pathways, has been simplified and built into the robot, giving it a human-like walking gait. A video demonstrating the robot legs can be viewed at http://www.techbriefs.com/tv/roboticlegs .

In a paper published in July in the Institute of Physics’ Journal of Neural Engineering, the authors say that a key component of the human walking system is the central pattern generator (CPG), a neural network in the spinal cord that generates rhythmic muscle signals. The CPG produces and controls signals by gathering information from different parts of the body responding to the environment, which is what allows humans to walk without thinking about it.

The simplest form of a CPG is a half-center consisting of two neurons that fire signals alternately, producing a rhythm. The university robot contains an artificial half-center and sensors that deliver information back to the half-center, including load sensors that sense force in the limb when the leg presses on a surface. Each leg of the robot consists of a hip, knee, and ankle moved by nine muscle actuators. A rotating motor pulls on Kevlar® straps to simulate muscle contractions. Each strap features a load sensor that models a leg tendon, senses tension when a muscle is contracted, and sends signals to the brain about how much force is being exerted and where.
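To make the half-center idea concrete, the sketch below simulates two mutually inhibiting neurons that take turns firing. It uses the Matsuoka oscillator, a standard textbook model that is swapped in here for illustration and is not the Arizona team's controller. The function names, parameter values, and step size are all assumptions, and the load-sensor feedback described above is left out.

```python
def simulate_half_center(steps=20000, dt=0.01, tau=1.0, adapt_tau=12.0,
                         inhibition=2.5, fatigue=2.5, drive=1.0):
    """Euler-integrate a two-neuron Matsuoka oscillator (illustrative values).

    Returns a list of (y1, y2) firing rates, one pair per time step.
    """
    u = [0.1, 0.0]  # membrane states; the small offset breaks the symmetry
    v = [0.0, 0.0]  # adaptation ("fatigue") state of each neuron
    outputs = []
    for _ in range(steps):
        # Firing rate is the rectified membrane state.
        y = [max(0.0, u[0]), max(0.0, u[1])]
        outputs.append((y[0], y[1]))
        # Each neuron is excited by a constant drive, inhibited by the
        # other neuron's output, and slowed down by its own fatigue.
        du = [(-u[i] - fatigue * v[i] - inhibition * y[1 - i] + drive) / tau
              for i in range(2)]
        dv = [(-v[i] + y[i]) / adapt_tau for i in range(2)]
        for i in range(2):
            u[i] += dt * du[i]
            v[i] += dt * dv[i]
    return outputs


def count_switches(outputs):
    """Count how often the more active neuron changes over the run."""
    switches = 0
    leader = None
    for y1, y2 in outputs:
        if y1 == y2:
            continue  # no clear leader at this step
        current = 0 if y1 > y2 else 1
        if leader is not None and current != leader:
            switches += 1
        leader = current
    return switches


if __name__ == "__main__":
    trace = simulate_half_center()
    print("leader changes:", count_switches(trace))
```

Each neuron tires while it fires, which releases the other from inhibition, so the pair alternates without any rhythmic input. In a walking robot, the two outputs would plausibly drive opposing muscle groups, with sensor signals adjusting the timing.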

The researchers theorize that babies begin with a simple half-center, similar to the one developed in this robot, and over time learn a more complex walking pattern. Such an underlying network may also explain how people with spinal cord injuries can regain walking ability if properly stimulated soon after injury.

So Easy a Toddler Can Use It?

Due to increasing muscle weakness, children with neuromuscular diseases often have trouble moving their arms. To address this problem, the Wilmington Robotic Exoskeleton (WREX), an orthosis that helps children with very little residual arm strength, was conceived and developed at the Alfred I. duPont Hospital for Children, Wilmington, DE.

The WREX assistive device is primarily intended for those with muscular dystrophy, spinal muscular atrophy, and arthrogryposis, a rare congenital disorder. The unit is mounted to a person’s wheelchair or to a body jacket, and consists of a two-segment exoskeletal arm made of hinged metal bars. Elastic resistance bands assist voluntary arm movement while allowing full passive range of motion. The device can easily be customized to accommodate subjects of different sizes, weights, and arm lengths by changing the number of bands or sliding the telescoping links. Over time, the device was made progressively smaller to fit patients as young as six years of age, but it remained too heavy and bulky for younger children.
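The role of the band count can be illustrated with a textbook idealization of gravity balancing. It is not the WREX design calculation. For a single hinged link supported by an ideal zero-free-length spring, gravity is balanced at every angle when stiffness × anchor height × attachment distance equals mass × g × distance to the center of mass. The sketch below picks the number of identical parallel bands that comes closest to that condition. The function name, the parameters, and the example values are all hypothetical.

```python
G = 9.81  # gravitational acceleration, m/s^2


def bands_for_balance(arm_mass_kg, com_distance_m, band_stiffness_n_per_m,
                      anchor_height_m, attach_distance_m):
    """Pick the number of identical bands that best balances one arm link.

    Idealized model (an assumption): each band acts as a zero-free-length
    spring anchored above the pivot, and the bands act in parallel.
    Returns (band_count, residual), where residual is the peak unbalanced
    torque in N*m; a negative value means the bands slightly over-assist.
    """
    gravity_term = arm_mass_kg * G * com_distance_m
    per_band = band_stiffness_n_per_m * anchor_height_m * attach_distance_m
    band_count = max(1, round(gravity_term / per_band))
    residual = gravity_term - band_count * per_band
    return band_count, residual


if __name__ == "__main__":
    # Hypothetical numbers, not measurements from any WREX unit.
    count, residual = bands_for_balance(1.0, 0.2, 50.0, 0.05, 0.1)
    print(count, residual)
```

Under this model, a heavier arm or a longer link raises the gravity term, so more bands are needed. That is consistent with the adjustment by band count and link length described above.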

Emma, a two-year-old with arthrogryposis, could walk but could not lift her arms to feed herself, play, or hug. To fit her with the WREX, its designers needed to create a smaller and more lightweight version than was possible with traditional tools. They turned to 3D printing to design and create a prototype WREX in ABS plastic. The resulting parts were lightweight enough to attach to a little plastic vest for her, as shown in Figure 7. To see a video of Emma using what she calls her “Magic Arms,” go to http://www.techbriefs.com/tv/emmaWREX .

Due to the ease of manufacturing, the exoskeleton can grow with the child, which makes 3D printing especially exciting for those working in pediatric care. In addition, broken parts can be easily replicated and replaced. (See Figure 8) Designs can be worked out in CAD and built the same day. So far, 15 youngsters have received a custom 3D-printed exoskeleton.

Conclusion

Judging by these recent advances in healthcare robotics, there appears to be no limit to their potential usefulness. Author Isaac Asimov’s “Three Laws of Robotics” state: “A robot may not injure a human being or, through inaction, allow a human being to come to harm. A robot must obey orders given it by human beings except where such orders would conflict with the First Law. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.”

Although the laws were written for a work of fiction in 1942, many robotic uses currently in practice or in development far exceed the scope of what science fiction writers envisioned at the time. Future medical robots may someday be programmed to adhere to the Hippocratic Oath as well.

This article was compiled from various sources, including the University of Pittsburgh, Pittsburgh, PA; The National Institute of Advanced Industrial Science and Technology, Japan; Paro Robots U.S., Inc., Itasca, IL; Intuitive Surgical, Sunnyvale, CA; Corindus Vascular Robotics, Natick, MA; Mako Surgical Corp., Ft. Lauderdale, FL; Omnilife science, Inc., East Taunton, MA; InTouch Health, Santa Barbara, CA; Rice University, Houston, TX; the University of Granada, Granada, Spain; Georgia Institute of Technology, Atlanta, GA; Stratasys, Inc., Eden Prairie, MN; and the Alfred I. duPont Hospital for Children, Wilmington, DE.